NQSV scheduler

The SX-Aurora TSUBASA series comes in a variety of models, from the edge model to the data center model. The edge model is a tower model with one Vector Engine and can be used like a personal machine. The on-site and data center models are more expensive, have multiple Vector Engines in a single server, and are meant to be shared by multiple users. Even when only a few people share a server, coordinating them, for example deciding who uses which Vector Engines and for how long, can be very difficult.

The software that solves this problem is the job scheduler.

NEC provides a job scheduler, NQSV, for the efficient operation and management of SX-Aurora TSUBASA systems. The job scheduler can be used not only on the data center model but also on the edge model.

NEC Network Queuing System V (NQSV) is job management software that supports configurations ranging from a single node to large-scale clusters and multi-cluster systems.

End users work with the same user interface regardless of where their jobs run, even on heterogeneous clusters.

The concepts behind NQSV are as follows.

High operation rate

Strong backfill scheduling achieves a high system operation rate. Because resources are fully utilized, it also has a power-saving effect.

The following are the main features.

- Intelligent backfill scheduling

- Assure job execution start time

- Planned power saving operation

Easy high performance

Resource assignment that takes the system's PCIe and network topologies into account brings out the full performance of applications.

The following are the main features.

- PCIe topology aware scheduling

- Network topology aware scheduling

Various execution environments

In addition to SX-Aurora TSUBASA, NQSV can manage Xeon CPUs and GPUs.

It provides the most suitable execution environment for various types of jobs, including interactive jobs and workflows, and supports collaboration with OpenStack and Docker.

The following are the main features.

- Features specialized for SX-Aurora TSUBASA

- Failure management support

- Interactive job, workflow, OpenStack and Docker collaboration

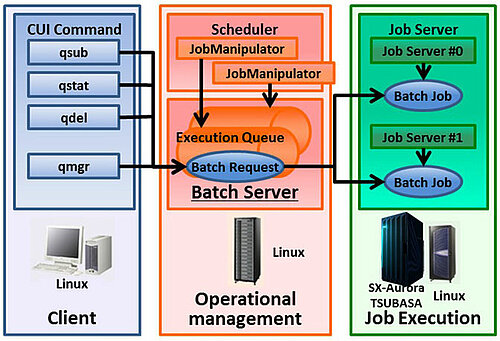

The NQSV system mainly consists of the following three components.

Client

The Client consists of command-line (CUI) commands, including qsub for submitting jobs, qstat for viewing job information, and qmgr for configuring Operation Management remotely. Users submit jobs and check their status from the Client.

Operation Management

Operation Management consists of two daemon processes: the Batch Server, which manages all jobs in an integrated way, and JobManipulator, which schedules them. NQSV manages each submission as a Batch Request, which consists of the jobs (called Batch Jobs) to be executed on the hosts.

Job Execution

Job Execution consists of a daemon process, called the Job Server, that manages the execution of jobs.

The overall flow is as follows. The user submits a job from the Client to the Batch Server as a Batch Request. JobManipulator then selects the execution host and schedules the execution time. At the scheduled time, NQSV starts the Batch Job on the execution host, and when the Batch Job finishes, NQSV returns the results to the user. When a system is shared by multiple users, each user simply submits jobs, and each job runs in an environment that matches the user's requirements without the users having to coordinate among themselves.

This technical article describes the core NQSV technology: job scheduling.
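The submit, schedule, execute, and return flow can be pictured with a toy model. The Python sketch below is purely illustrative: the class and method names (`BatchServer.submit`, `JobManipulator.pick_host`, `JobServer.run`) and the first-fit host choice are hypothetical stand-ins for the daemons named above, not NQSV interfaces.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class BatchRequest:
    req_id: int
    owner: str
    venodes: int                    # VE nodes requested (hypothetical field)
    host: Optional[str] = None      # filled in once scheduled
    result: Optional[str] = None    # filled in once the job finishes


class JobServer:
    """Stand-in for the Job Server daemon on one execution host."""

    def __init__(self, host: str):
        self.host = host

    def run(self, req: BatchRequest) -> str:
        # A real Job Server would start the Batch Job; this stub just reports completion.
        return f"request {req.req_id} finished on {self.host}"


class JobManipulator:
    """Stand-in scheduler: first host with enough VE nodes (not NQSV's real policy)."""

    def __init__(self, venodes_per_host: Dict[str, int]):
        self.capacity = dict(venodes_per_host)

    def pick_host(self, req: BatchRequest) -> Optional[str]:
        for host, cap in self.capacity.items():
            if cap >= req.venodes:
                return host
        return None


class BatchServer:
    """Stand-in for the Batch Server: queues requests and drives execution."""

    def __init__(self, jm: JobManipulator, job_servers: Dict[str, JobServer]):
        self.jm = jm
        self.job_servers = job_servers
        self.queue: List[BatchRequest] = []

    def submit(self, req: BatchRequest) -> None:
        # Conceptually what the Client's qsub command triggers.
        self.queue.append(req)

    def dispatch(self) -> None:
        # Jobs run synchronously in this toy, so no resource bookkeeping is needed.
        for req in list(self.queue):
            host = self.jm.pick_host(req)
            if host is None:
                continue  # no host fits; the request stays queued
            req.host = host
            req.result = self.job_servers[host].run(req)  # returned to the user
            self.queue.remove(req)


jm = JobManipulator({"vh0": 8, "vh1": 8})
bs = BatchServer(jm, {h: JobServer(h) for h in ("vh0", "vh1")})
big = BatchRequest(1, "alice", venodes=16)
small = BatchRequest(2, "bob", venodes=4)
bs.submit(big)
bs.submit(small)
bs.dispatch()
print(small.result)                  # request 2 finished on vh0
print([r.req_id for r in bs.queue])  # [1]
```

The point of the toy is the division of labor: users only talk to the Batch Server, the scheduler alone decides placement, and execution hosts only run what they are given.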

NQSV supports the following scheduling functions.

- Backfill Scheduling

- Power Saving Scheduling

- VE Concentration Scheduling

- Network Topology aware Scheduling

NQSV supports First In, First Out (FIFO) Scheduling and Priority Based Scheduling, as well as powerful Backfill Scheduling. With FIFO or Priority Based Scheduling, when a large job is submitted, nodes sit idle until enough of them become free for that job to start. Backfill Scheduling runs subsequent jobs ahead of time to fill those idle nodes and increase the operation rate. NQSV's Backfill Scheduling assigns each job to the right slot (Vector Engine node and time), taking into account the execution time and resources declared by the user. It builds on the intelligent resource scheduling we have been developing to maximize the system operation rate.
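A minimal sketch of the backfill idea, assuming all jobs are submitted at time 0 and each declares a node count and an elapsed time. It uses conservative backfilling, a textbook variant in which a later job may start early only if it delays no job that already has a reservation; NQSV's own algorithm is more elaborate and is not reproduced here. The names `Job` and `backfill` are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

Reservation = Tuple[int, int, int]  # (start, end, nodes)


@dataclass
class Job:
    name: str
    nodes: int    # VE nodes declared by the user
    elapsed: int  # declared elapsed time, e.g. in minutes


def used_at(reservations: List[Reservation], t: int) -> int:
    """Nodes already reserved at time t."""
    return sum(n for (s, e, n) in reservations if s <= t < e)


def fits(reservations: List[Reservation], total: int, start: int, job: Job) -> bool:
    end = start + job.elapsed
    # Usage only rises at reservation start times, so it is enough to check
    # `start` itself plus every reservation that begins inside [start, end).
    points = [start] + [s for (s, _, _) in reservations if start < s < end]
    return all(used_at(reservations, p) + job.nodes <= total for p in points)


def backfill(jobs: List[Job], total_nodes: int) -> Dict[str, int]:
    """Conservative backfilling: jobs are considered in queue order, and each
    gets the earliest slot that does not delay any job already reserved."""
    reservations: List[Reservation] = []
    schedule: Dict[str, int] = {}
    for job in jobs:
        if job.nodes > total_nodes:
            raise ValueError(f"{job.name} needs more nodes than the system has")
        # The earliest feasible start is either now (0) or when some job ends.
        candidates = sorted({0} | {e for (_, e, _) in reservations})
        start = next(t for t in candidates if fits(reservations, total_nodes, t, job))
        reservations.append((start, start + job.elapsed, job.nodes))
        schedule[job.name] = start
    return schedule


jobs = [Job("A", 2, 10), Job("B", 4, 5), Job("C", 2, 10), Job("D", 1, 20)]
print(backfill(jobs, total_nodes=4))  # {'A': 0, 'B': 10, 'C': 0, 'D': 15}
```

Job C starts at time 0 alongside A because it finishes before B's reserved start at time 10, so the otherwise idle nodes are used without delaying the larger job B. This is why accurate declared elapsed times matter: they are what make the reservation check possible.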

The resources that can be specified for Backfill Scheduling are Vector Host resources (elapsed time, CPU cores, and memory) and Vector Engines. In the example, when a small job that uses 3 VEs is submitted, Backfill Scheduling fills the gaps by scheduling it ahead of other jobs. To make Backfill Scheduling even more effective, NQSV also provides a pre- and post-check policy.

Backfill Scheduling

- Assign a job to the right slot (VE node and time) considering execution time and required resource declared by the user

- Inherit the intelligent resource scheduling we have been developing to maximize system operation rate

Backfill specifiable resources

| Example | Submission command |
|---|---|
| Job E: 16 VE node job | `$ qsub --venode=16 test.sh` |
| Job F: (3 VE nodes + 4 CPUs) x 2 virtual hosts | `$ qsub -b 2 --venum_lhost=3 --cpunum_lhost=4` |
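Backfill has to match several resources at once. The sketch below checks a Job F-style request (2 logical hosts, each needing 3 VEs and 4 CPU cores) against free Vector Host resources. The `VectorHost` fields, the one-logical-host-per-Vector-Host rule, and the host values are assumptions for illustration, not NQSV behavior.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class VectorHost:
    name: str
    free_ves: int
    free_cpus: int
    free_mem_gb: int


def place_logical_hosts(hosts: List[VectorHost], count: int, ves: int,
                        cpus: int, mem_gb: int = 0) -> Optional[List[str]]:
    """Pick `count` Vector Hosts that each satisfy the per-logical-host request.

    Assumption for this sketch: each logical host goes on a different Vector Host.
    Returns None when the request cannot start now (a backfill scheduler would
    then look for a later slot instead).
    """
    fitting = [h.name for h in hosts
               if h.free_ves >= ves and h.free_cpus >= cpus and h.free_mem_gb >= mem_gb]
    return fitting[:count] if len(fitting) >= count else None


hosts = [
    VectorHost("vh0", free_ves=8, free_cpus=24, free_mem_gb=96),
    VectorHost("vh1", free_ves=2, free_cpus=24, free_mem_gb=96),  # too few VEs
    VectorHost("vh2", free_ves=4, free_cpus=2, free_mem_gb=96),   # too few CPU cores
    VectorHost("vh3", free_ves=5, free_cpus=10, free_mem_gb=96),
]
# Job F: 2 logical hosts x (3 VEs + 4 CPU cores)
print(place_logical_hosts(hosts, count=2, ves=3, cpus=4))  # ['vh0', 'vh3']
```

Note that vh2 is rejected even though it has enough VEs: every declared resource must fit, which is why declaring CPU cores and memory alongside VEs lets the scheduler find usable gaps.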

The pre- and post-check policy schedules, right after a job, a job whose node count is close to that job's node count. This keeps free space contiguous and makes it easier to fit subsequent jobs in.

If a job finishes earlier than scheduled, a following job of the same size can start earlier in the freed slot, increasing job throughput.
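One plausible reading of the pre- and post-check policy, written as a hypothetical helper: when a job's nodes are about to be freed, prefer the waiting job whose node count is closest to that job's. This is an interpretation for illustration, not NQSV's documented rule, and `pick_follower` is an invented name.

```python
from typing import List, Optional, Tuple


def pick_follower(prev_nodes: int,
                  waiting: List[Tuple[str, int]]) -> Optional[str]:
    """Choose which waiting job to place right after a job using `prev_nodes` nodes.

    Hypothetical rule: among jobs that fit in the nodes the previous job frees,
    prefer the one whose node count is closest to it; ties keep queue order.
    """
    fitting = [(name, n) for name, n in waiting if n <= prev_nodes]
    if not fitting:
        return None
    # min() returns the first minimal element, so earlier-queued jobs win ties.
    name, _ = min(fitting, key=lambda job: prev_nodes - job[1])
    return name


queue = [("J1", 2), ("J2", 6), ("J3", 8), ("J4", 12)]
print(pick_follower(8, queue))  # J3
print(pick_follower(7, queue))  # J2
```

Picking J3 after an 8-node job means the freed block is reused whole, so if the first job ends early, J3 can simply slide forward into the same nodes.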

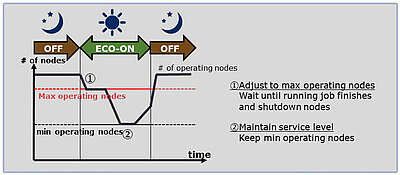

Power Saving Scheduling

- Power peak cut by specifying the maximum number of operating nodes

  - Constrains system power consumption

- Automatic power saving operation that follows variations in job demand

  - Detects slots where no job is scheduled

  - Automatically determines node start-up and shutdown between the maximum and minimum numbers of operating nodes

    - Shuts down nodes on which no job is scheduled

    - Starts up nodes by calculating the resources required by jobs waiting in the queue

NQSV's Power Saving Scheduling turns off unneeded nodes to save power. You can set a maximum number of operating nodes to cut peak power consumption: the system saves power because no more nodes are active than this limit. You can also set a minimum number of active nodes, so that some nodes stay running and are ready to start jobs immediately.
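The maximum and minimum node limits amount to clamping a demand-driven node count. A minimal sketch, assuming demand is measured in whole nodes; `target_active_nodes` and `plan` are hypothetical helpers, not NQSV settings or commands.

```python
from typing import Tuple


def target_active_nodes(busy_nodes: int, queued_demand: int,
                        min_nodes: int, max_nodes: int) -> int:
    """Nodes that should be powered on.

    busy_nodes: nodes that currently have jobs scheduled on them
    queued_demand: additional nodes required by jobs waiting in the queue
    """
    if not 0 <= min_nodes <= max_nodes:
        raise ValueError("require 0 <= min_nodes <= max_nodes")
    wanted = busy_nodes + queued_demand
    # max_nodes caps peak power; min_nodes keeps some nodes ready for new jobs.
    return max(min_nodes, min(max_nodes, wanted))


def plan(active_now: int, target: int) -> Tuple[str, int]:
    """Turn the target into a start-up or shutdown action."""
    if target > active_now:
        return ("start", target - active_now)
    if target < active_now:
        return ("shutdown", active_now - target)
    return ("keep", 0)


print(plan(6, target_active_nodes(3, 10, min_nodes=2, max_nodes=8)))  # ('start', 2)
print(plan(6, target_active_nodes(0, 0, min_nodes=2, max_nodes=8)))   # ('shutdown', 4)
```

In the first case demand (13 nodes) exceeds the cap, so only 8 nodes run and the rest of the work waits; in the second, an idle system still keeps 2 nodes warm.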

NQSV also provides Vector Engine (VE) Concentration Scheduling, a feature specific to SX-Aurora TSUBASA. By default, NQSV prioritizes job performance: it assigns VEs so that they are close to the HCA (host channel adapter) and contend less for Vector Host resources. In some cases, however, the distance between the VE and the HCA does not affect performance, for example when running a large number of single-node jobs. In such cases, VE Concentration Scheduling packs jobs onto fewer nodes, increasing the number of nodes that can be shut down and making Power Saving Scheduling more effective.

The example illustrates the difference between the two scheduling methods. If three 12VE requests and one 2VE request are submitted, the default scheduling leaves no free node, whereas VE Concentration Scheduling leaves one node free.

This one node can be shut down by Power Saving Scheduling to save power.
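The effect of concentration on idle nodes can be shown with a small placement experiment. The sketch assumes hypothetical numbers (4 nodes with 8 VEs each, four single-node 2-VE jobs) rather than the figure's, and uses "emptiest node first" as a crude stand-in for the performance-first default, which in NQSV actually considers VE-HCA distance. The function names are invented for illustration.

```python
from typing import List


def place(requests: List[int], nodes: int, ves_per_node: int,
          concentrate: bool) -> List[int]:
    """Assign single-node VE requests to nodes; return VEs in use per node."""
    used = [0] * nodes
    for need in requests:
        candidates = [i for i in range(nodes) if used[i] + need <= ves_per_node]
        if not candidates:
            raise ValueError(f"a {need}-VE request does not fit")
        if concentrate:
            # Best fit: the fullest node that still has room.
            target = max(candidates, key=lambda i: used[i])
        else:
            # Spread: the emptiest node (stand-in for the default policy).
            target = min(candidates, key=lambda i: used[i])
        used[target] += need
    return used


def idle_nodes(used: List[int]) -> int:
    """Nodes with no VE in use; Power Saving Scheduling could shut these down."""
    return sum(1 for u in used if u == 0)


spread = place([2, 2, 2, 2], nodes=4, ves_per_node=8, concentrate=False)
packed = place([2, 2, 2, 2], nodes=4, ves_per_node=8, concentrate=True)
print(spread, idle_nodes(spread))  # [2, 2, 2, 2] 0
print(packed, idle_nodes(packed))  # [8, 0, 0, 0] 3
```

The same jobs keep every node busy when spread out, but leave three nodes idle, and therefore eligible for shutdown, when concentrated.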

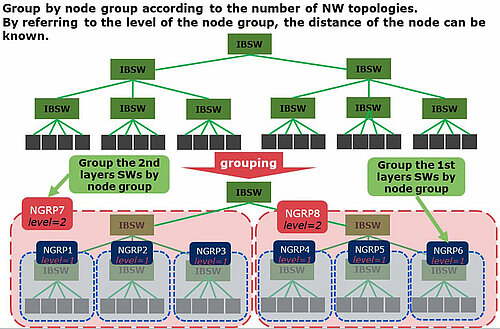

NQSV provides Network Topology aware Scheduling to maximize the performance of communication-intensive jobs. Nodes are organized into node groups that mirror the levels of the network (NW) topology, so the distance between nodes can be determined from the level of the node group they share. The figure shows an example for a fat-tree network, which can be represented by grouping the nodes under each switch. In addition, NQSV can be configured to either close job placement within a node group of the network topology or not.

Assigning a job closed within a node group of the NW topology keeps the number of switches (SW) the job's traffic crosses to a minimum. In the example, with closed scheduling, Job3 would have to cross a level-2 node group if it ran in the same time period as Job1 and Job2, so Job3 is scheduled in a later time period instead.
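Closed placement can be sketched as searching node groups from the lowest level upward and accepting only a group that holds the whole job. The two-level fat tree below (node names, group sizes, and the `closed_placement` helper) is hypothetical.

```python
from typing import List, Optional, Set

# Hypothetical two-level fat tree: 8 nodes under 4 leaf switches (level-1
# groups); each pair of leaf switches forms a level-2 group.
LEVEL1: List[Set[str]] = [{"n0", "n1"}, {"n2", "n3"}, {"n4", "n5"}, {"n6", "n7"}]
LEVEL2: List[Set[str]] = [LEVEL1[0] | LEVEL1[1], LEVEL1[2] | LEVEL1[3]]


def closed_placement(free: Set[str], nodes_needed: int,
                     levels: List[List[Set[str]]]) -> Optional[List[str]]:
    """Place a job entirely inside one node group, trying the lowest level first.

    Lower levels mean fewer switch hops between the job's nodes. Returns None
    when no single group in `levels` has enough free nodes right now.
    """
    for groups in levels:
        for group in groups:
            avail = sorted(group & free)
            if len(avail) >= nodes_needed:
                return avail[:nodes_needed]
    return None


# Job1 and Job2 already occupy n0-n2 and n4-n6 in this time period.
free_now = {"n3", "n7"}
print(closed_placement(free_now, 2, [LEVEL1, LEVEL2]))  # None
```

With only `n3` and `n7` free, a 2-node job cannot be closed within a level-2 group, so under closed scheduling it would wait for a later time period, which mirrors the Job3 case above.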

- Intel and Xeon are trademarks of Intel Corporation in the U.S. and/or other countries.

- NVIDIA and Tesla are trademarks and/or registered trademarks of NVIDIA Corporation in the U.S. and other countries.

- Linux is a trademark or a registered trademark of Linus Torvalds in the U.S. and other countries.

- Proper nouns such as product names are registered trademarks or trademarks of individual manufacturers.